One of my favorite shows, Person of Interest, recently wrapped up its five-season run, and did so in a way that makes discussing it an absolute imperative for geeks everywhere.

This show may have started as a fun twist on a traditional crime show back in season one, but it gradually evolved (yes, pun intended) into a full-on science fiction masterpiece. It had everything: a bit of a detective story for the crime show lovers; super-spy action by John Reese (Jim Caviezel), the ex-CIA operative; a nameless hero that comes out of the shadows to save someone who has nowhere else to turn, for the superhero lovers; characters that were three-dimensional; plot lines that made you think; conversion stories to make you cry; heroes to lift you up, and villains you wanted to go down hard.

And a machine that taught you how to be human.

The science-fiction parts of the story took a while to really assert themselves, but it was a wonderful twist on the usual science fiction plot. It combined the usual crime show and the usual science fiction show and made it into a very unusual, brilliant storytelling masterpiece.

A machine that teaches us something about ourselves is nothing new in science fiction. Probably the most famous example is Data from Star Trek TNG, but those robots go as far back as Isaac Asimov’s Caves of Steel, with Elijah Baley, the detective, and his humaniform robot partner, Daneel (upon whom Data was based, actually).

This one, known throughout the show only as “the Machine,” surpasses even Data and his predecessor, Daneel. She (as the characters eventually refer to the Machine in the show) is probably one of the best teachers of virtue I’ve seen in television. And she’s an artificial intelligence.

That doesn’t sound like it should make sense, but it does, and it is worthy of some discussion here. Proceed at your own risk, however, because I can’t talk about the Machine without spoiling several plots, including the amazing season finale. You have been duly warned.

Just remember: you are being watched.

The government has a secret system, a Machine that spies on you every hour of every day. I know, because I built it. I designed the Machine to detect acts of terror, but it sees everything — violent crimes involving ordinary people. People like you. The government considers these people irrelevant; we don’t. Hunted by the authorities, we work in secret. You will never find us, but victim or perpetrator, if your number’s up, we’ll find you.

You hear this at the beginning of every episode, and the farther you go into the series, the more relevant it becomes. How many times do we see people portrayed as just statistics, not actual people? We’re Catholics; we know that each and every individual is important, and so do the main characters. Harold Finch (Michael Emerson) and Mr. Reese are the heroes who know that saving a single life is just as important as saving ten thousand. Like Donna told the Doctor: “Just save someone!” But it isn’t just Harold and John who don’t consider these “ordinary people” to be irrelevant; the Machine doesn’t, either, and that becomes very significant as the show goes on.

When you first find out about the Machine, it’s just that — an “it,” a computer program that a very smart man invented after 9/11 to help save people from another major terrorist attack. That’s it. Season One is very much a crime show/spy show/superhero show combination. It isn’t until the end of that season and the beginning of the next that you can tell that things have changed.

When Harold is kidnapped by a crazy little twit known only as Root (Amy Acker), John has to find him. The Machine, however, keeps sending him numbers: the social security number of someone either in trouble or about to cause trouble, just as the Machine has been doing this whole time. All she did was switch from “contacting ADMIN” to “contacting SECONDARY ADMIN.” John gets the calls instead of Harold. She does this because Harold told her to. He wanted John to keep on helping people even if something happened to him.

John, however, has other ideas. He actually plays chicken with an artificial intelligence to force her to give him what he wants, and, as I thought the first time I watched it, this was where the Machine changed from “the Machine” to “her.” I liked the idea of the Machine being forced to actually make a choice. In order to save “the number” — whoever was in danger of being killed at the moment — she had to comply with John’s demand to locate Harold and rescue him; otherwise, John would actually stand there and let the person be killed, which, to her programming, was unacceptable. After she does that, you begin to see the change in the story. She becomes a little more independent, helping them a little more, straying a little farther outside of her usual programmed parameters.

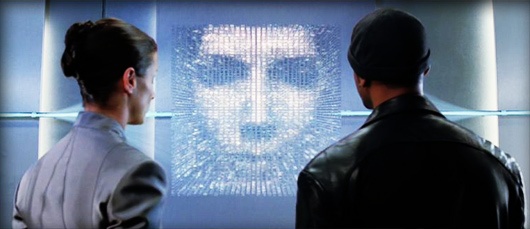

Mr. Reese is giving the Machine his ultimatum.

My initial fan theory, though, wasn’t quite right. As the show goes on, you get a lot of flashbacks that show how Harold taught the Machine how to save people, and she was there saving him over and over again, from the very beginning. Somehow, Harold managed to give an artificial intelligence a real standard of morality, not just a set of parameters to be followed. A small amount of suspension of disbelief is required, of course, because obviously this AI does not have a soul, but that’s the beauty of science fiction. She acts as if she did.

During those flashbacks, we can see that even Harold, our hero, succumbed to the same temptation that he denounces in the government in the opening speech of the show — initially, he wanted to save everyone, but without saving someone. He was designing the Machine to detect acts of terror, and save many while ignoring the individual. He told his friend Nathan that he had to draw the line somewhere; he had to tell the Machine how to distinguish between important and unimportant, between relevant and irrelevant. As if those individuals about to be murdered were irrelevant, but those about to be killed by an act of terrorism were relevant.

Harold didn’t understand that in order to care for everyone, you have to care for each of the someones that make up “everyone.” He even went so far as to delete the Machine’s memories every night at midnight, to keep her from learning from her experiences and caring about the individual. He treated the Machine like an “it” with a set of parameters to be followed, and not like a person, the way Root treats her even when we first meet her. Now, as Catholics, we’re probably thinking “of course, stupid. It’s an AI. It’s not a person.” Again, suspend that disbelief and go with it, because the longer you watch the show, the more you see that the Machine isn’t just an AI, just like Data isn’t just a robot.

Harold had to learn the hard way about saving the individual; his friend Nathan was killed, and the Machine had tried to warn him about it before it happened. Harold didn’t listen. That was when he changed his mind. A machine taught him a lesson about the value of human life.

This isn’t the first science fiction story to do so. Terminator 2: Judgment Day did exactly the same thing. At the very end, Sarah Connor’s voice-over tells us: “The unknown future rolls toward us. I face it, for the first time, with a sense of hope. Because if a machine, a Terminator, can learn the value of human life, maybe we can too.”

The later seasons get a bit more complicated than the first and second; the story changed, but that didn’t make the show any less worth watching. It actually improved a bit. We get a much broader plot line. Rather than this crew saving a bunch of valuable individuals (which is an excellent story), we get the Big Bad Evil Guy introduced: Samaritan.

Of course, if Harold can do it, so can someone else. There’s a second all-powerful machine about to be brought online, and our team of heroes has to stop it. Because this AI is different from the Machine that we’ve come to know and love and trust.

Harold went through a lot of trouble to make sure that what the Machine sees is completely hidden from anyone else, especially the federal government. He’d built a system of mass surveillance, but the only thing anyone would ever get — including the government’s “relevant” numbers, as well as Harold and John’s “irrelevant” numbers — was just that, a single social security number. The point was to keep the rights of the people being watched intact. The government wasn’t the one watching; it was a Machine that couldn’t tell any of your secrets. Once the number was given to the government, they had to use legal means to find out what the number meant, just as John and Harold have to find out if their number is a victim or perpetrator.

What would happen, though, if the Machine was an open system?

Ask question; get answer. Samaritan is exactly that — a mass surveillance system with no boundaries, no morality, no kindly creator to teach it how to be good. Just a madman wanting to change the world. Sound similar to anything happening in real life, maybe?

Even the opening monologue changes: You are being watched. The government has a secret system, a system you asked for, to keep you safe. A machine that spies on you every hour of every day. You’ve granted it the power to see everything, to index, order and control the lives of ordinary people. The government considers these people irrelevant. We don’t. But to it, you are all irrelevant. Victim or perpetrator, if you stand in its way, we’ll find you.

It’s a continuing theme that we are irrelevant to this super-intelligence, which is actually quite kind, and wants to take care of us by controlling everything we do, for our own good. Here’s another throwback to the classics. In I, Robot, VIKI has the same plan (at least in the movie; the original story was a little different, but the point remains the same).

“My logic is undeniable.”

“You charge us with your safekeeping, yet despite our best efforts, your countries wage wars, you toxify your earth, and pursue ever more imaginative means of self-destruction. You cannot be trusted with your own survival.”

I know better than you; give up your rights and let me take care of you. Sound familiar?

The Machine spends the entire show protecting free will; Samaritan spends its time undermining it. Its “assets,” unlike John and Harold and Shaw and (eventually) Root and Fusco (Kevin Chapman), don’t know who they are working for, or what the end game is. They are led around by the nose by an all-powerful, all-seeing, all-knowing artificial intelligence that doesn’t care for their lives or well-being. All Samaritan has is its objective. They are tools; nothing more. To the Machine, however, her “assets” are people, and each one of them is valuable and irreplaceable.

They’re so valuable, sometimes they have to be convinced to change their ways. The Machine spent a good deal of Season Three “retasking asset.” She didn’t just have John kill Root; she tried to save Root by convincing her to change her ways. The Machine locked Root, or Samantha Groves, up in a mental institution and actually persuaded her to become a better person. Yes, you read that correctly: an artificial intelligence changed Root from a psychotic murdering nut job into a moral person, a hero. Root wasn’t just following the Machine’s orders; she actually believed that life was precious, thanks to the intervention of this particular super-intelligence. Fusco had his conversion experience because he met John and Detective Carter (Taraji P. Henson); Root had hers because she met the Machine. The Machine didn’t manipulate Root into being a tool; she convinced Root to change herself. It’s a continuing theme in the show; between her and Fusco, the writers really do a wonderful job portraying the beauty and necessity of free will. You can change if you want to; that’s something near and dear to Catholic hearts.

The Machine values her assets’ lives so much that she is willing to give up her own life to save theirs. Again, maintain your suspension of disbelief, because, of course, this is a TV show about a sentient, self-aware, moral computer program, which is impossible. But if you’re watching it, you have to follow the rules of the universe as they have been established by the show, which means, yes, the Machine has a life, and she chooses to give it up to save not everyone, but someone.

She’s very much like EDI from Mass Effect 3: “I would risk non-functionality for him.”

This plot was so very beautiful, I have to tell you about it. The spoiler warning remains in effect, so proceed at your own risk.

By the time we get to the end of Season Four, the excrement has encountered the rotary air impeller, and our heroes are about to be killed by Samaritan operatives. Samaritan wants the Machine dead, and anyone allied with it is worse than irrelevant; they are a threat, and must be eliminated.

The Machine and Samaritan are negotiating, and Samaritan gives the Machine sixty seconds to give up its location, or it will have Root and Harold killed. Root says to the Machine: “Don’t do it. Please. Don’t give yourself up. Harold was right. We are interchangeable. You can replace us! You can keep fighting!” Harold is the last holdout about the Machine’s status as anything more than a complex computer program, and Root has been trying to convince him otherwise for most of this season. To Harold, they’re just this computer program’s hardware, like a printer or a USB drive. If one becomes non-functional, a replacement can be acquired.

After Root tells her this, the Machine shows her response on a nearby computer screen:

“You are wrong, Harold. You are not interchangeable. I failed to save Sameen. I will not fail you now. Release them first, and you will know my location.”

Now tell me that isn’t an instance of “greater love than this no man hath, that a man lay down his life for his friends” (John 15:13). It may be a computer program, but (in the context of the story) it just made the same kind of ultimate sacrifice that human beings like us aspire to. Every story about a hero who died for his friend, for his country, to save a single life, teaches us the same thing that the Machine taught us right here. Selflessness, sacrifice, heroism.

Later, our crew of heroes is trying to save the Machine’s core code, so that even if Samaritan succeeds in “killing” or deleting her, Harold could bring her back. They have to get the code compressed and saved to very special hardware in order for that to work, all while fighting off an army of Samaritan operatives trying to kill them.

This is the part where I started crying.

Guilt? From a machine? Regret, not for her own death, but for failing to save others? And, my personal favorite: gratitude for life?

How much more human does it get?

It’s a lot like the Star Trek TNG episode, The Offspring, where we meet Data’s daughter, Lal. “Thank you for my life.”

That selflessness is heroic, whether from a machine, in this case, or from a human being, in John Reese’s case. This time, though, the superhero isn’t Captain America, or the Hulk, or Thor, or Superman, or the X-Men. It’s a computer program that somehow managed to learn how to love life, all life, to the point of giving up her own to save others. She does it twice, actually, so if you weren’t convinced by the end of Season Four that she was a good teacher, you’ll definitely be convinced by the end of Season Five.

By the end of the show, the only way to stop Samaritan is to upload the ICE9 virus into the internet and destroy it completely. That will destroy Samaritan’s code, but it will also destroy the Machine’s code. Harold is the only one who has the access and the password to upload the virus into the system. “Some sacrifices are as unavoidable as they are necessary,” as Harold said. The whole time, the Machine knew the password (“Dashwood,” as in Sense and Sensibility) and could have uploaded it herself, had something happened to Harold (which it almost did). The point is, she would have done it herself. That’s another major difference between the Machine and Samaritan. Samaritan is continually keeping itself alive at the expense of human lives; the Machine is giving up her own life to save those human lives. Where Samaritan was trying desperately to kill Harold, even killing its own primary asset at one point, in order to save itself, the Machine knows what Harold is doing, and supports him.

“Eight letters. Your decision, Harold.”

“Eight letters? You knew all along.”

“Perhaps I know you better than you know yourself, Harold.”

The Machine has to explain to Harold how she got to be this way: “In order to predict people, I had to understand them.” That’s what makes her different from Samaritan. Her understanding, and Harold’s kindly influence, gave her the morality that Samaritan lacked. For Samaritan, the end — safety — justified the means; for the Machine, there was something even more important than plain physical safety — free will.

We know that actual machines and computer programs don’t have any free will. Siri can’t actually make any decisions, and no matter how many times you ask her who her best friend is, she isn’t actually your best friend. She’s just a set of programmed responses: if A, then B; if not A, then C. But that’s the beauty of science fiction, even a sneaky science fiction like this one, that started out as a crime show. Science fiction stories are the modern fairy tales; they have fantastic creatures in them, and those creatures teach us how to be good. If an elf, a dwarf, and a hobbit can teach us how to be human, so can an artificial intelligence who watches you through your cell phone, and might just ring a random pay phone in New York City to tell her Primary Assets to save you. Some heroes wear capes and fight crime in the daylight; some linger in the shadows. But they’re the same — they teach us how to be better, they hold up an ideal for us to aspire to, and every now and then, we can live up to that. Sometimes, it’s a comfort to know that you are being watched.

Maybe it’ll make us act a little better.

Follow the squirrel minion to get to Lori’s website, Little Squirrel Books.

Great review, Lori. I’d given up on this series, but you’ve encouraged me to give the final season another look. Thanks!